Building an AI Agent from Scratch: The Smallest Useful Loop

I love learning new things, and my favourite way to learn is to start with something concrete and small. I don’t want to begin with a big framework or a complicated architecture diagram. I want to see something working with my own eyes, even if the first version is very limited.

That is why I’m starting a new series called Building an AI Agent from Scratch in this newsletter.

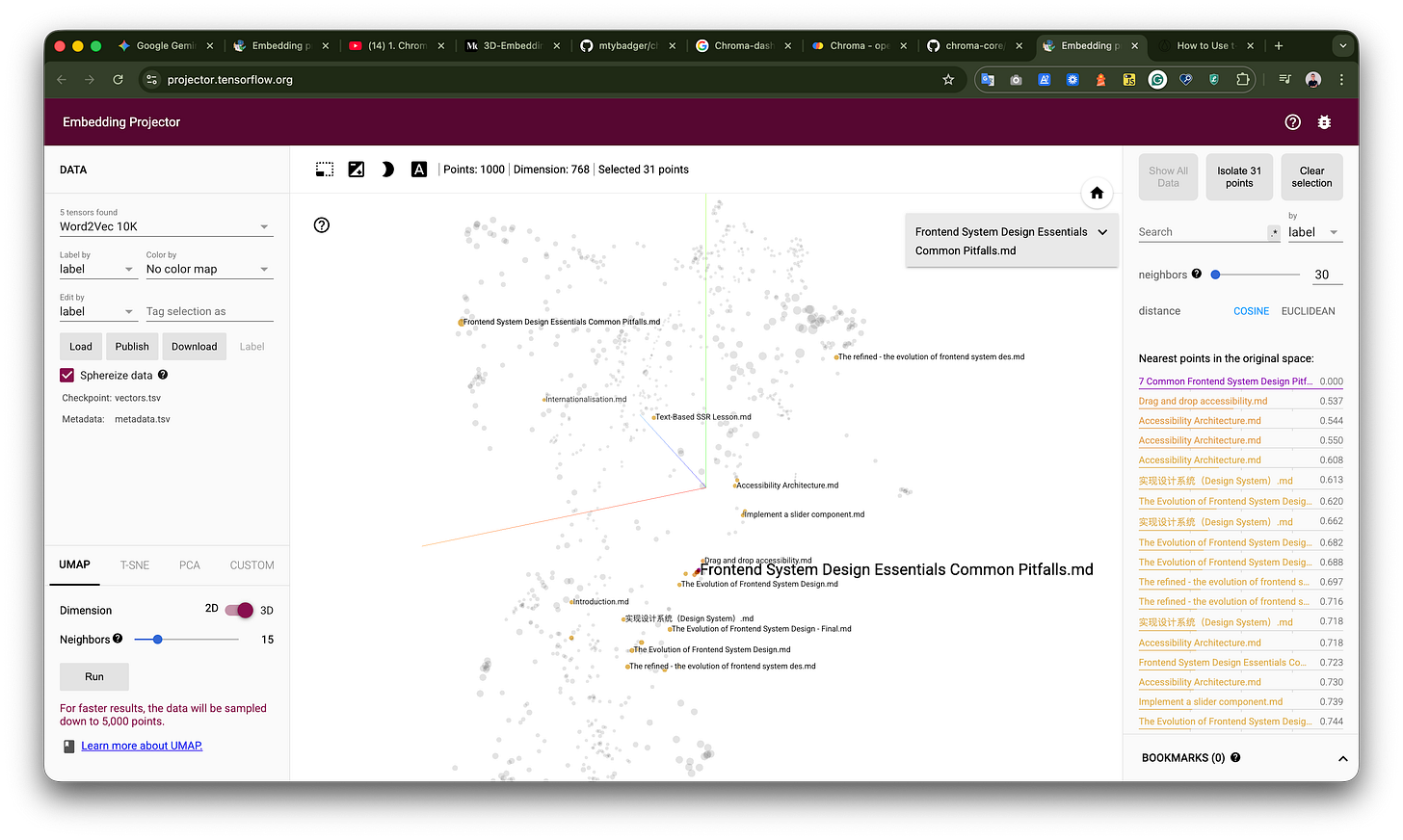

Over the next several issues, I’ll walk through how to build a small agent system from scratch. We’ll start with a very simple REPL, then gradually add tools, introduce a simple plugin pattern, build a memory system with embeddings and RAG, and later explore routing and planning.

The final result will be a small personal knowledge assistant. You may find it directly useful for your own workflow, but the bigger goal is to understand the architecture behind an agent system. Once the moving parts are clear, you can apply the same ideas to your own projects.

With the current wave of interest in AI, there is no shortage of resources, tutorials, and demos. But I still find it hard to find practical guides that show what is really happening under the hood, step by step. That is the gap I hope this series can help fill.

In this issue, we’ll start with the skeleton: the smallest useful shape of an AI agent.

I also recorded a YouTube version of this issue, where I build the first version step by step. If you prefer watching the code come together, you can check out the video here: YouTube video

If you’ve been hearing people talk about AI agents, you might be wondering what is actually going on under the hood. The term can sound vague, especially when people use it to describe everything from coding assistants to workflow automation tools. But at the core, the idea is surprisingly simple.

An agent is a program with a loop. It reads input, decides what to do next, takes an action, observes the result, and then repeats.

That loop is the foundation. Once you can see it clearly, many agent systems become much easier to understand.

The goal here is not to build a production-ready framework. The goal is to make the moving parts visible. We’ll start with a tiny program, connect it to a local language model, and then give it one real tool so it can fetch live weather data.

By the end, we’ll have the basic skeleton of an agent.

Why Start Small?

When people talk about AI agents, the examples often become complicated very quickly. There may be multiple tools, memory, planning, file access, browser automation, background tasks, or code execution.

These are useful capabilities, but they can also hide the simple idea underneath.

So instead of starting with a full assistant, let’s start with the smallest possible version: a command-line program that keeps asking for input.

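    import { createInterface } from "node:readline/promises";
    import { stdin as input, stdout as output } from "node:process";

    // Assumed helper: the exit words here are placeholders.
    const shouldExit = (line) =>
      ["exit", "quit"].includes(line.trim().toLowerCase());

    async function main() {
      const rl = createInterface({ input, output });

      while (true) {
        // 1) Read
        const line = await rl.question("You> ");

        // 2) Parse / branch (commands vs normal input)
        if (shouldExit(line)) break;

        // 3) Act: print out the line
        output.write(`Assistant> ${line}\n\n`);
      }

      rl.close();
    }

    main();

You type something. The program reads it. It does something with that input. Then it waits for the next line.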

That is already a loop.

At this stage, there is no intelligence involved. The program simply echoes the input back to you. If you type:

    hello

It replies:

    Assistant> hello

Obviously, this is not useful yet. But the structure matters. The program is already doing the essential things we need:

    read input
    decide what to do
    take an action
    repeat

This is the skeleton we’ll keep improving. The “decide” step is trivial for now, and the “action” step is also trivial. But the shape of the system is already there.

Replacing Echo with a Model Call

The next step is to replace the echo behavior with a language model call. Instead of sending the user input directly back to the terminal, the program sends it to a model.

    // Uses the same imports and shouldExit helper as the echo version.
    async function main() {
      const rl = createInterface({ input, output });

      /** @type {{ role: string, content: string }[]} */
      const messages = [];

      while (true) {
        const line = await rl.question("You> ");

        // Checks (abbreviated): ignore blank lines, stop on an exit command.
        const trimmed = line.trim();
        if (!trimmed) continue;
        if (shouldExit(trimmed)) break;

        messages.push({ role: "user", content: trimmed });

        const data = await ollamaChat(messages);
        const assistant = data?.message;

        // `||` rather than `??` so an empty string also triggers the fallback.
        const reply =
          assistant?.content?.trim?.() ||
          "(no text from model; check model / Ollama logs)";

        // Keep transcript so the model has context on the next turn.
        messages.push({ role: "assistant", content: reply });

        output.write(`\nAssistant> ${reply}\n\n`);
      }

      rl.close();
    }

For this example, I use Ollama. If you haven’t used Ollama before, you can think of it as a local model server. It runs a model on your machine and gives your application an API to talk to it.

That means my Node.js program doesn’t need to contain the model itself. It only needs to send a request to Ollama. Ollama runs the model locally and sends the response back.
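The loop calls an `ollamaChat` helper that is not shown here. As a rough sketch of what such a helper could look like, the version below posts the transcript to Ollama's `/api/chat` endpoint. The port is Ollama's default, the model name is a placeholder for whatever model you have pulled, and the `fetchImpl` parameter is my own addition so the function can be tried without a running server.

```javascript
// Assumptions: Ollama is listening on its default port, and the model
// named here has already been pulled. Both values are placeholders.
const OLLAMA_URL = "http://localhost:11434/api/chat";
const MODEL = "llama3.1";

/**
 * Send the transcript to Ollama and return the parsed JSON response.
 * `fetchImpl` is injectable so the function can be exercised offline.
 */
async function ollamaChat(messages, fetchImpl = fetch) {
  const res = await fetchImpl(OLLAMA_URL, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    // stream: false asks for one complete JSON object instead of chunks.
    body: JSON.stringify({ model: MODEL, messages, stream: false }),
  });

  if (!res.ok) {
    throw new Error(`Ollama request failed: ${res.status} ${res.statusText}`);
  }

  // Expected shape: { message: { role, content, ... }, ... }
  return res.json();
}
```

With Ollama running, `await ollamaChat([{ role: "user", content: "hello" }])` should resolve to an object whose `message.content` holds the reply text. The global `fetch` used as the default requires Node.js 18 or later.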

The loop itself has not changed. Only the action has changed.

Before, the program echoed the input back to the terminal:

    user input -> echo back

Now, the program sends the input to the model and prints the model response:

    user input -> send to model -> print model response

At this point, the program becomes a simple local chat app. It can receive input, send it to a local model, and print the response.

To make the conversation work properly, we also need to keep a list of messages. This message list is the transcript of the conversation. Each message usually has a role and some content:

    system    -> instructions or rules
    user      -> what the human says
    assistant -> what the model says back

The transcript matters because the model needs context. If we only send the latest user input, the model won’t know what happened earlier in the conversation. By keeping the message history, we give the model enough context to continue naturally.
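To make the three roles concrete, here is a small sketch of how a transcript might grow over two turns. The system prompt text and the sample conversation are invented for illustration, and `createTranscript` is a hypothetical helper, not something from the series code.

```javascript
// The system prompt text is a placeholder, not the one used in the series.
function createTranscript(systemPrompt) {
  return [{ role: "system", content: systemPrompt }];
}

const messages = createTranscript("You are a concise personal assistant.");

// Turn 1: the user speaks, the model answers, and both are recorded.
messages.push({ role: "user", content: "My name is Sam." });
messages.push({ role: "assistant", content: "Nice to meet you, Sam." });

// Turn 2: the whole list is sent again, so the model can still see the name.
messages.push({ role: "user", content: "What is my name?" });

console.log(messages.map((m) => m.role).join(" -> "));
// prints: system -> user -> assistant -> user
```

Because the full list goes out with every request, the second question can be answered from the first turn.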

So far, we have built something useful, but it still has an important limitation. The model can only respond based on what it already knows or what we provide in the prompt.

If we ask:

    What's the weather in Melbourne right now?

The model cannot reliably answer that on its own. It might guess, give a generic answer, or say it doesn’t have access to real-time information.

This is where tools become important.

Why Tools Matter

A language model does not automatically fetch live data. It does not automatically call APIs, read your calendar, check the weather, or update a database.

What it can do is decide that a tool is needed. Then your application executes that tool.

This distinction is important. The model does not run the tool directly. It produces a structured request that says, in effect:

    I need to call this tool with these arguments.

Then the application decides what to do with that request.

For example, if the user asks for the current weather, the model might request a get_weather tool with a location:

    tool: get_weather
    arguments: { location: "Melbourne" }

The application then runs the actual tool, gets the weather data, and sends the result back to the model. Only after that does the model produce the final answer for the user.
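Since the application is the one deciding what to do with a request, it can help to see that decision as code. The sketch below assumes the response shape Ollama's chat API uses for tool calls (`message.tool_calls`, each with `function.name` and `function.arguments` as an object). `KNOWN_TOOLS` and `readToolCalls` are hypothetical names I made up for this example.

```javascript
// Hypothetical allow-list: tool name -> argument names that must be present.
const KNOWN_TOOLS = { get_weather: ["location"] };

/**
 * Pull tool requests out of a chat response and keep only the ones the
 * application is willing to run. Returns [] when the model answered in text.
 */
function readToolCalls(data) {
  const calls = data?.message?.tool_calls ?? [];
  return calls
    .map((call) => ({
      name: call?.function?.name,
      args: call?.function?.arguments ?? {},
    }))
    .filter(({ name, args }) => {
      const required = KNOWN_TOOLS[name];
      return (
        Array.isArray(required) &&
        typeof args === "object" &&
        required.every((key) => key in args)
      );
    });
}

// Example response, shaped like the request described above.
const sample = {
  message: {
    role: "assistant",
    content: "",
    tool_calls: [
      { function: { name: "get_weather", arguments: { location: "Melbourne" } } },
    ],
  },
};

console.log(JSON.stringify(readToolCalls(sample)));
// prints: [{"name":"get_weather","args":{"location":"Melbourne"}}]
```

Anything that is not on the allow-list, or that is missing a required argument, is dropped here. A real runtime might prefer to report the problem back to the model instead.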

This is the point where a simple LLM chat program starts to become an agent. Not because the model suddenly becomes magical, but because the program now has a loop around the model that can take actions in the outside world.

Adding the First Tool

Let’s add one real tool: get_weather.

In my example, I already have a small local command-line tool for weather. It takes a location and prints weather information. From the agent’s point of view, this command-line tool becomes a capability called get_weather.

But the model needs to know this tool exists. So when the program sends a request to Ollama, it includes a tool definition alongside the messages.

    const tools = [
      {
        type: "function",
        function: {
          name: "get_weather",
          description:
            "Fetch current weather for a place using OpenWeather. Prefer 'City,CC' (ISO country) when ambiguous.",
          parameters: {
            type: "object",
            required: ["location"],
            properties: {
              location: {
                type: "string",
                description:
                  'Query for the city, e.g. "Tokyo,JP", "New York,US", or "Melbourne,AU".',
              },
            },
          },
        },
      },
    ];

Conceptually, the program is saying:

    You can answer normally.
    But if you need live weather information,
    you are allowed to call get_weather with a location.

Now the model has another option. Instead of returning only normal text, it can return a tool call.
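As a sketch of what "alongside the messages" can mean in practice, the request body simply gains a `tools` field next to `messages`. The tool definition below is an abbreviated copy for illustration, `buildChatBody` is a hypothetical helper, and the model name is a placeholder.

```javascript
// Abbreviated version of the get_weather definition, for illustration only.
const weatherTool = {
  type: "function",
  function: {
    name: "get_weather",
    description: "Fetch current weather for a place.",
    parameters: {
      type: "object",
      required: ["location"],
      properties: { location: { type: "string" } },
    },
  },
};

// Build the JSON body for a chat request. The model name is a placeholder.
function buildChatBody(messages, tools = [], model = "llama3.1") {
  const body = { model, messages, stream: false };
  // Leave the field out entirely when no tools are offered.
  if (tools.length > 0) body.tools = tools;
  return body;
}

const body = buildChatBody(
  [{ role: "user", content: "What's the weather in Melbourne right now?" }],
  [weatherTool],
);

console.log(Object.keys(body).join(", "));
// prints: model, messages, stream, tools
```

The model never sees the weather CLI itself. It only sees this description, which is why the name, description, and parameter hints are worth writing carefully.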

For example, the user might ask:

    What’s the weather in Melbourne right now?

The model may respond with a structured request:

    Call get_weather with location = "Melbourne"

Again, the model is not running the weather command. The Node.js program is. In this example, that Node.js program is the agent runtime we are building.

The runtime receives the tool call, runs the weather CLI, captures the result, and adds that result back into the conversation as a tool message. Then the program calls the model again.

This time, the model has the weather data in the transcript, so it can produce a grounded answer for the user.
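The runtime step described above (run the tool, then record the result as a tool message) might look something like the sketch below. It assumes the tool call has the shape `{ function: { name, arguments } }` and that a message with `role: "tool"` is how the result goes back into the transcript. `executeToolCall` and the `handlers` map are hypothetical names, and the weather handler is a stub rather than a real CLI call.

```javascript
/**
 * Run one requested tool and return the message to append to the transcript.
 * `handlers` maps tool names to async functions. Failures are reported back
 * to the model as text instead of crashing the loop.
 */
async function executeToolCall(call, handlers) {
  const name = call?.function?.name;
  const args = call?.function?.arguments ?? {};
  const handler = Object.hasOwn(handlers, String(name))
    ? handlers[name]
    : undefined;

  let content;
  if (typeof handler !== "function") {
    content = `Error: unknown tool "${name}"`;
  } else {
    try {
      content = String(await handler(args));
    } catch (err) {
      content = `Error: ${err.message}`;
    }
  }

  return { role: "tool", content };
}

// Stub handler standing in for the real weather CLI call.
const handlers = {
  get_weather: async ({ location }) => `Weather for ${location}: (stub data)`,
};
```

In the loop, the returned message would be pushed onto `messages` before the next model call, for example `messages.push(await executeToolCall(call, handlers))`.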

The flow looks like this:

    User asks a question
            ↓
    Model decides whether a tool is needed
            ↓
    Application executes the requested tool
            ↓
    Tool returns the result
            ↓
    Application sends the result back to the model
            ↓
    Model produces the final answer

This is why an agent is usually not just a single model request. It is a loop.

The model decides what should happen next. The runtime performs the action. The result is fed back into the model. Then the model decides again.

The Agent Loop

If we simplify the whole system, the agent loop looks like this:

    while the conversation is active:
        read user input
        send messages and tools to the model
        check the model response

        if the model requests a tool:
            execute the tool
            add the tool result to the messages
            call the model again
        otherwise:
            show the final response to the user

That is the core idea.
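Translated into JavaScript, the inner part of that loop (everything after reading the user input) could be sketched as follows. The model call and the tool runner are passed in as `chat` and `runTool`, both hypothetical parameters, so the function stays independent of any particular model server. The `maxSteps` cap is my own addition to guarantee the loop stops even if the model keeps asking for tools.

```javascript
/**
 * Handle one user turn: call the model, run any requested tools, and call the
 * model again until it answers in plain text or the step limit is reached.
 * `messages` is mutated so the transcript carries over to the next turn.
 */
async function runTurn(messages, { chat, runTool, maxSteps = 5 }) {
  for (let step = 0; step < maxSteps; step++) {
    const data = await chat(messages);
    const message = data?.message ?? { role: "assistant", content: "" };

    // Record the model's message, including any tool requests it contains.
    messages.push(message);

    const calls = message.tool_calls ?? [];
    if (calls.length === 0) {
      return message.content; // no tool requested: this is the final answer
    }

    // Execute each requested tool and feed the result back into the transcript.
    for (const call of calls) {
      messages.push(await runTool(call));
    }
  }

  return "(stopped: tool-call limit reached)";
}
```

The outer REPL would push the user's line onto `messages`, call `runTurn`, and print whatever it returns.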

An LLM by itself is mostly text in and text out. An agent is the loop around the LLM. That loop allows the system to decide when to use tools, execute actions, observe results, and continue.

Once you understand this loop, many agent systems become less mysterious. They may have more tools. They may have memory. They may plan multiple steps. They may interact with files, browsers, APIs, or codebases.

But the basic shape is still the same:

    decide
    act
    observe
    repeat

Takeaway

The main takeaway is this: an LLM alone is mostly text in and text out, while an agent is the loop around the LLM that can decide when to use tools and take actions.

This example is intentionally small. It is not meant to be a full assistant or a production-ready framework. It is meant to make the moving parts visible.

Once the skeleton is clear, we can start adding more realistic capabilities.

For example, instead of only fetching weather data, we could add a Google Calendar tool. Then the agent could not only answer questions, but also inspect events, find free time, or create calendar entries.

That is where this series is heading.

We’ll start from this small loop, then gradually add more tools and design decisions around it, so the agent system grows step by step instead of becoming a black box.

If you are building your own agent system, I think this is the best place to start: not with a framework, but with the loop.

Once you understand the loop, frameworks become easier to evaluate. You can ask better questions:

Where is the model call?

Where are the tools defined?

Who executes the tool?

How is the result passed back?

How does the loop stop?

Those questions help you see the system more clearly. And that clarity is more useful than any magic-looking demo.

In the next issue, we’ll take this skeleton one step further and look at how to make tools easier to add and manage. That is where the system starts to feel less like a demo and more like a small framework you can extend.

If this topic is useful to you, leave a comment and let me know what kind of agent tool you’d like to see added next. Weather is a simple starting point, but calendar, notes, search, and personal knowledge tools all open up interesting design questions.